War on fake news could be won with the help of behavioral science

- Written by Gleb Tsipursky, Assistant Professor of History of Behavioral Science, The Ohio State University

Facebook CEO Mark Zuckerberg recently acknowledged[1] his company’s responsibility in helping create the enormous amount of fake news that plagued the 2016 election – after earlier denials[2]. Yet he offered no concrete details on what Facebook could do about it.

Fortunately, there’s a way to fight fake news that already exists and has behavioral science on its side: the Pro-Truth Pledge[3] project.

I was part of a team of behavioral scientists that came up with the idea of a pledge as a way to limit the spread of misinformation online. Two studies that tried to evaluate its effectiveness suggest it actually works.

Facebook’s Mark Zuckerberg has been on the spot lately to come up with ways for his company to fight misinformation on his platform.

Reuters/Stephen Lam

Fighting fake news

A growing number of American lawmakers and ordinary citizens believe social media companies like Facebook and Twitter need to do more to fight the spread of fake news – even if it results in censorship.

A recent survey[4], for example, showed that 56 percent of respondents say tech companies “should take steps to restrict false info online even if it limits freedom of information.”

But what steps they could take – short of censorship and government control – is a big question.

Before answering that, let’s consider how fake news spreads. In the 2016 election, for example, we’ve learned that a lot of misinformation[5] was a result of Russian bots that used falsehoods[6] to try to exacerbate American religious and political divides.

Yet the posts made by bots wouldn’t mean much unless millions of regular social media users chose to share the information. And it turns out ordinary people spread misinformation[7] on social media much faster and further than they spread true stories.

In part, this problem results from people sharing stories without reading them[8]. Such people often don’t know they are spreading falsehoods.

However, 14 percent of Americans surveyed in a 2016 poll[9] reported knowingly sharing fake news. This may be because research shows people are more likely[10] to deceive others when it benefits[11] their political party or other group to which they belong, especially when they see others from that group sharing misinformation.

Fortunately, people also have a behavioral tic that can combat this: We want to be perceived as honest. Research has shown that people’s incentive to lie decreases when they believe there is a higher risk[12] of negative consequences, are reminded[13] about ethics, or commit[14] to behaving honestly.

That’s why honor codes reduce cheating[15] and virginity pledges delay[16] sexual onset.

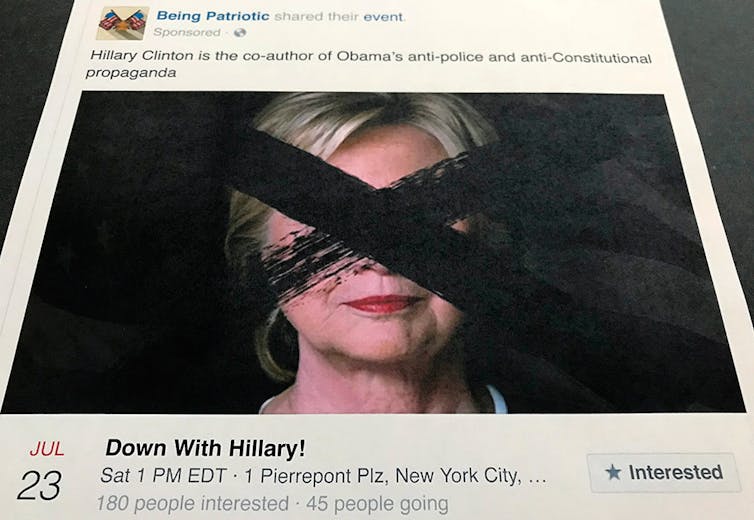

‘Being Patriotic’ was a Facebook page that reportedly was run by Russian provocateurs trying to influence the 2016 election. But it would have gone nowhere had regular users not shared it.

AP Photo/Jon Elswick

Taking the pledge

That’s where the “pro-truth pledge” comes in.

Appalled by the misinformation that characterized both the 2016 U.S. election and the U.K. Brexit campaign[17], a group of behavioral scientists at The Ohio State University and the University of Pennsylvania, including me, wanted to create a tool to fight misinformation. The pledge, launched in December 2016, is a project of a nonprofit I co-founded called Intentional Insights[18].

The pledge aims to promote honesty by asking people to commit to 12 behaviors that research shows correlate with an orientation toward truthfulness. For example, the pledge asks takers to fact-check information before sharing it, cite sources, ask friends and foes alike to retract info shown to be false, and discourage others from using unreliable news sources.

So far, about 6,700 people and organizations have taken the pledge, including American social psychologist Jonathan Haidt[19], Australian moral philosopher Peter Singer[20], Media Bias/Fact Check[21] and U.S. lawmakers Beto O’Rourke[22], Matt Cartwright[23] and Marcia Fudge[24].

About 10 months after launching the pledge, my colleagues and I wanted to evaluate whether it had in fact been effective at changing behavior and reducing the spread of unverified news. So we conducted two studies comparing pledge-takers’ sharing on Facebook before and after they took the pledge. To add a little outside perspective, we included a researcher from the University of Stuttgart who did not take part in creating the pledge.

In one study[25], we asked participants to fill out a survey evaluating how well their sharing of information on their own and others’ profile pages aligned with the 12 behaviors outlined in the pledge, both a month before and a month after they signed it. The survey revealed large and statistically significant changes in behavior, including more thorough fact-checking[26], a growing reluctance[27] to share emotionally charged posts, and a new tendency[28] to push back against friends who shared misinformation.

While self-reporting is a well-accepted methodology that mirrors the approach of studies on honor codes[29] and virginity pledges[30], it’s subject to the potential bias[31] of subjects reporting desirable changes – such as more truthful behaviors – regardless of whether these changes actually occurred.

So in a second study[32], we got permission from participants to observe their actual Facebook sharing. We examined the first 10 news-relevant posts one month after they took the pledge and graded the quality of the information shared, including the links, to determine how closely their posts matched the behaviors of the pledge. We then looked at the first 10 news-relevant posts 11 months before they took the pledge and rated those. We again found large, statistically significant changes in pledge-takers’ adherence to the 12 behaviors, such as fewer posts containing misinformation and more posts citing sources.

Clarifying ‘truth’

The pledge works, I believe, because it replaces the fuzzy concept of “truth,” which people can interpret differently, with clearly observable behaviors, such as fact-checking before sharing, differentiating one’s opinions from the facts and citing sources.

The pledge we developed is only one part of a larger effort to fight misinformation. Ultimately, though, it shows that simple tools exist that Facebook and other social media companies can use to battle the onslaught of misinformation people face online, without resorting to censorship.

References

- ^ recently acknowledged (www.thewrap.com)

- ^ earlier denials (www.facebook.com)

- ^ Pro-Truth Pledge (www.protruthpledge.org)

- ^ recent survey (www.journalism.org)

- ^ lot of misinformation (www.nytimes.com)

- ^ used falsehoods (www.washingtonpost.com)

- ^ spread misinformation (science.sciencemag.org)

- ^ without reading them (datascience.columbia.edu)

- ^ 2016 poll (www.journalism.org)

- ^ more likely (journals.sagepub.com)

- ^ it benefits (journals.ama.org)

- ^ higher risk (www-2.rotman.utoronto.ca)

- ^ are reminded (journals.ama.org)

- ^ commit (psychodramaaustralia.edu.au)

- ^ reduce cheating (www.tandfonline.com)

- ^ delay (www.journals.uchicago.edu)

- ^ U.K. Brexit campaign (www.independent.co.uk)

- ^ Intentional Insights (intentionalinsights.org)

- ^ Jonathan Haidt (en.wikipedia.org)

- ^ Peter Singer (en.wikipedia.org)

- ^ Media Bias/Fact Check (mediabiasfactcheck.com)

- ^ Beto O’Rourke (orourke.house.gov)

- ^ Matt Cartwright (cartwright.house.gov)

- ^ Marcia Fudge (fudge.house.gov)

- ^ one study (papers.ssrn.com)

- ^ more thorough fact-checking (medium.com)

- ^ growing reluctance (imgur.com)

- ^ new tendency (bijonbo.blogspot.com)

- ^ honor codes (www.middlebury.edu)

- ^ virginity pledges (www.journals.uchicago.edu)

- ^ potential bias (www.sciencedirect.com)

- ^ second study (papers.ssrn.com)

Read more http://theconversation.com/war-on-fake-news-could-be-won-with-the-help-of-behavioral-science-96093